Python is a powerful language, and with proper use anyone can build beautiful things with it. After studying Python I was impressed by its power; in particular, I love how easily we can scrape a website with it. Scraping is the process of extracting data from a website by parsing its HTML. So I learned the basics and started scraping many websites.

Recently I wanted to build something big with scraping, but I had no idea what to do. Then I came across the MP transportation site and realized that it holds a lot of data. The website is very simple: you open it, enter your vehicle number details, and search. The result describes your vehicle, including its type, color, and so on.

I wrote the script in Python 2.7 because some modules had less support on Python 3.x. I decided to use the 'last' search type because the other types gave me problems (possibly a problem with the site). This means searching every input from 0000 to 9999, which makes 10,000 requests. I used 4 digits because the search requires at least 4 characters. So yes, the job was this large.
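The 10,000 search inputs described above are simply every four-digit string from 0000 to 9999. A minimal sketch of generating them follows; the function name is my own for illustration, and how each input is actually submitted to the site is left out.

```python
def search_inputs(width=4):
    """Yield every zero-padded numeric string of the given width (0000 ... 9999)."""
    for number in range(10 ** width):
        # zfill pads with leading zeros, so 42 becomes "0042"
        yield str(number).zfill(width)


inputs = list(search_inputs())
print(len(inputs))   # 10000
print(inputs[0])     # 0000
print(inputs[-1])    # 9999
```

Keeping this as a generator means the inputs can be fed to the scraper one at a time without building the whole list first.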

I wrote a program and started scraping. With input 0000 and the 'last' search type, the scrape succeeded and returned 1,700+ records. The problem was that this single request took 5 minutes. The cause was server delay: the slowness was not on my side, since the server had to search that much data in its database. After realizing this I did some math.

If 1 request takes 5 minutes,

then,

10000 requests = 50000 minutes = 833.33 hours = 35 days approx = 1 month 4 days

So my laptop would need to run continuously for 1 month and 4 days, and trust me, that is a really bad idea. But would it be worth it?

If 1 request returns approximately 1,000 records,

10,000 requests = 10,000,000 records

So yes, hypothetically, in 35 days I would collect 10 million records.
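The back-of-the-envelope estimate above can be reproduced in a few lines. This is only a sketch of the arithmetic; the constant and function names are mine, and the 1,000 records per request is the rough average assumed in the text.

```python
REQUESTS = 10000            # inputs 0000 to 9999
MINUTES_PER_REQUEST = 5     # observed server delay for one search
RECORDS_PER_REQUEST = 1000  # rough average assumed in the text


def estimate(requests, minutes_per_request, records_per_request):
    """Return (total_hours, total_days, total_records) for a sequential run."""
    total_minutes = requests * minutes_per_request
    total_hours = total_minutes / 60.0
    total_days = total_hours / 24.0
    return total_hours, total_days, requests * records_per_request


hours, days, records = estimate(REQUESTS, MINUTES_PER_REQUEST, RECORDS_PER_REQUEST)
print("%.2f hours, %.1f days, %d records" % (hours, days, records))
# prints: 833.33 hours, 34.7 days, 10000000 records
```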

Still, as programmers we should get things done as fast as possible, and for that I clearly needed more power, memory, security, and so on. I tried multiprocessing and multithreading, but they did not work as expected.

So the solution was to get my hands on some free servers. I looked for free web hosting companies that support Python, planning to deploy my script there. I tried pythonanywhere.com and Heroku with the Flask framework, without success. I spent almost 15 days deciding what to do. Later I found scrapinghub.com, which lets you deploy a spider to the cloud and takes care of the rest, so I went for it and started learning it.

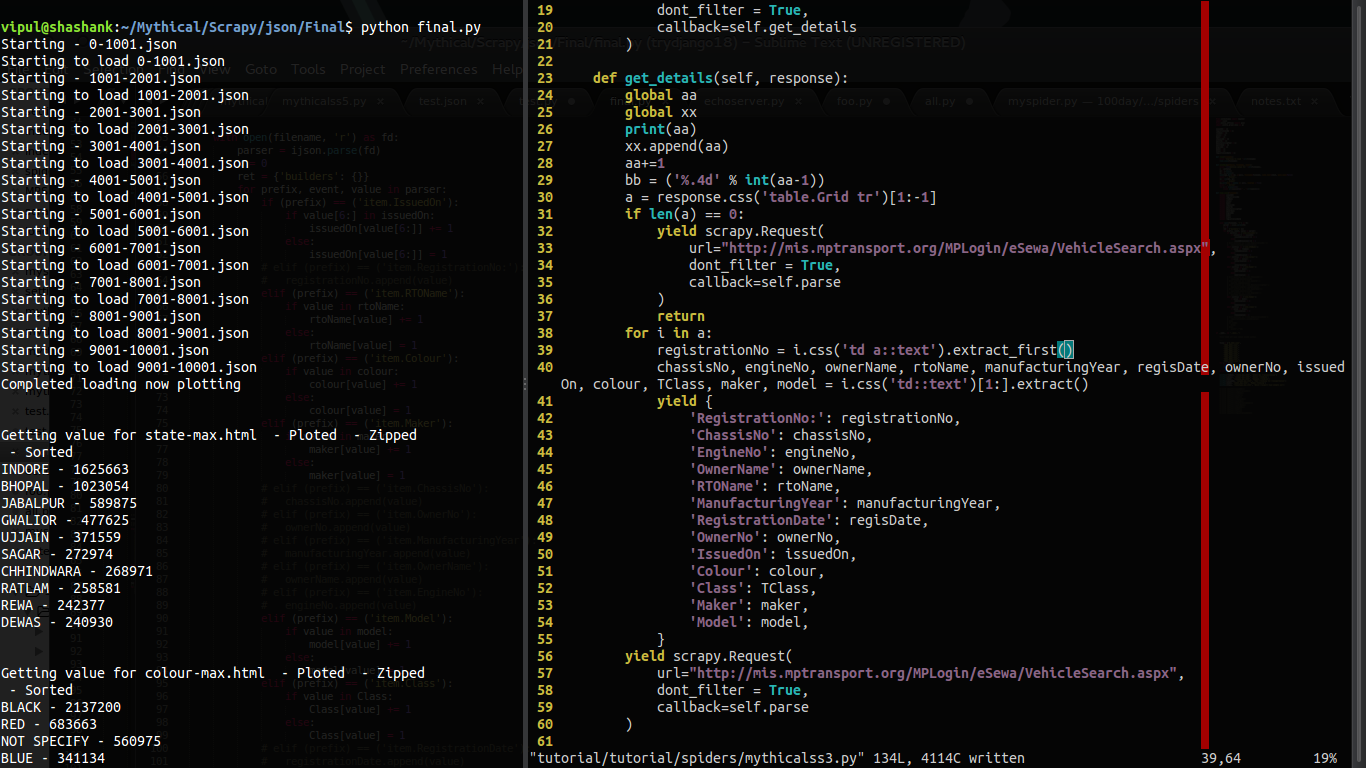

After that I learned how to use Scrapy and Scrapinghub and wrote a new program that scrapes the website with Scrapy spiders. The source code is linked at the end of this page.

Result:

Day 1 - 4,092,328 (4 million records in 17 hours)

Id1 - Items - 1,134,421 (15 hours)

Id2 - Items - 1,025,282 (17 hours)

Id3 - Items - 983,367 (14 hours)

Id4 - Items - 949,228 (13 hours)

Size - 1.3 GB

Day 2 - 6,498,462 (6.4 million records in 17 hours)

(Created 2 more IDs to speed up the process)

Id1 - Items - 1,241,643 (17 hours)

Id2 - Items - 1,000,308 (15 hours)

Id3 - Items - 962,863 (15 hours)

Id4 - Items - 1,052,844 (15 hours)

Id5 - Items - 1,144,686 (16 hours)

Id6 - Items - 1,096,118 (15 hours)

Size - 2.4 GB

Final Result

Total data collected: 10,590,790

Total size: 3.7 GB

Time consumed: 34 hours

In just 34 hours of scraping we collected the 10 million records estimated earlier. Doing this the old-fashioned way on a laptop would have taken about a month, so this was a big improvement.
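Running several IDs in parallel means the 10,000 search inputs have to be divided among them somehow. The post does not say how the inputs were actually split, so the following is only one plausible approach: dealing the inputs round-robin so each job gets a nearly equal share. The function name is hypothetical.

```python
def split_inputs(inputs, jobs):
    """Deal inputs round-robin into `jobs` lists of nearly equal size."""
    return [inputs[start::jobs] for start in range(jobs)]


all_inputs = [str(n).zfill(4) for n in range(10000)]
chunks = split_inputs(all_inputs, 6)  # 6 IDs, as on day 2
print([len(chunk) for chunk in chunks])
# prints: [1667, 1667, 1667, 1667, 1666, 1666]
```

Round-robin dealing keeps the chunk sizes within one item of each other, which matters when every request is slow and one overloaded job would hold up the whole run.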

Now what?

The JSON files are huge. It would be great to convert them into a database, but doing so would again take a lot of time.

From JSON to Database

We can insert about 5 records per second,

so 10,000,000 records = 2,000,000 seconds = 33,333 minutes = 555 hours = 23 days.

That is not practical.

I even tried an SQL script, which is much faster than the previous script, but it would still take approximately 20 days.

So we will keep the data in JSON format, load it in a Python script, and do our math there. Loading one file may take about 10 minutes, but time is not the issue. The problem is that loading a JSON file in Python takes a lot of memory, and since we are working on an ordinary laptop we need another approach. To avoid this I used the ijson module. It is a handy tool that iterates over the JSON data instead of loading it all at once. The trade-off is that it is a little slower, but it is still worth it.
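A rough sketch of this streaming approach is shown here. It assumes each export is a single top-level JSON array of records (which is what Scrapy's JSON feed export produces); the file name `items.json`, the field name `color`, and both function names are assumptions for illustration and are not taken from the actual source code.

```python
from collections import Counter


def stream_records(path):
    """Yield one record at a time from a file holding a top-level JSON array."""
    import ijson  # third-party package: pip install ijson

    with open(path, "rb") as handle:
        # The "item" prefix selects each element of the top-level array,
        # so only one record is held in memory at a time.
        for record in ijson.items(handle, "item"):
            yield record


def top_values(records, field, n=10):
    """Count the values of `field` across records and return the n most common."""
    counts = Counter()
    for record in records:
        counts[record.get(field)] += 1
    return counts.most_common(n)


# Tiny in-memory sample standing in for stream_records("items.json")
sample = [{"color": "BLACK"}, {"color": "RED"}, {"color": "BLACK"}]
print(top_values(sample, "color"))
# prints: [('BLACK', 2), ('RED', 1)]
```

On the real data the call would look like `top_values(stream_records("items.json"), "color")`. Because `top_values` accepts any iterable, the counting logic can be checked on a small list before running it over gigabytes of JSON.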

Stats

Which city has the most vehicles?

- INDORE - 1625663

- BHOPAL - 1023054

- JABALPUR - 589875

- GWALIOR - 477625

- UJJAIN - 371559

- SAGAR - 272974

- CHHINDWARA - 268971

- RATLAM - 258581

- REWA - 242377

- DEWAS - 240930

Link: https://plot.ly/~shashank-sharma/19/

Which color do people prefer when buying a vehicle?

- BLACK - 2137200

- RED - 683663

- NOT SPECIFY - 560975

- BLUE - 341134

- GREY - 288952

- WHITE - 283631

- SILVER - 255836

- RBK - 238896

- P BLACK - 177379

- PBK - 168518

Link: https://plot.ly/~shashank-sharma/11/

Which company has the most vehicles in MP?

- HERO HONDA MOTORS - 2032369

- BAJAJ AUTO LTD - 1677867

- HERO MOTO CORP LTD. - 1563023

- TVS MOTOR CO. LTD. - 1130974

- HONDA MCY & SCOOTER P I LTD - 1102624

- MAHINDRA & MAHINDRA LTD - 463175

- TATA MOTORS LTD - 280684

- MARUTI SUZUKI INDIA LIMITED - 258392

- MARUTI UDYOG LTD - 249949

- ESCORTS LTD - 139231

Link: https://plot.ly/~shashank-sharma/13/

In which year were the most vehicles issued?

- 2016 - 1406802

- 2014 - 1392520

- 2015 - 1166079

- 2013 - 964026

- 2011 - 845374

- 2012 - 734092

- 2010 - 716772

- 2009 - 607693

- 2008 - 481315

- 2007 - 471963

Link: https://plot.ly/~shashank-sharma/15/

Which vehicle model is the most common?

- SPLENDOR PLUS - 325878

- PLATINA - 302537

- HF DELUXE SELF CAST WHEEL - 254166

- ACTIVA (ELE AUTO & KICK START) - 216252

- TVS STAR CITY - 210397

- CD DLX - 188885

- DISCOVER DTS - SI - 180193

- PASSION PRO(DRM-SLF CASTWHEEL) - 163088

- ACTIVA 3G EAS KS CBS BS3 - 162542

- PASSION PLUS - 146584

Link: https://plot.ly/~shashank-sharma/17/

What type of vehicle do most people own?

- MOTOR CYCLE - 6531708

- SCOOTER - 1291932

- MOTOR CAR - 881930

- TRACTOR - 687360

- GOODS TRUCK - 210932

- MOPED - 197450

- OMNI BUS FOR PRIVATE USE - 142478

- AUTO RICKSHAW PASSENGER - 124051

- TROLLY - 111358

- PICK UP VAN - 95238

Link: https://plot.ly/~shashank-sharma/9/

And that is how many more questions can be answered with this data.

Thank you for reading to the end. I hope by now you see the real power of Python.

Source code: https://github.com/shashank-sharma/MP-Transportation-Analysis

Loved it? Check out my GitHub blog here: http://shashank-sharma.github.io/